Artificial Intelligence (AI) is reshaping industries, powering everything from recommendation engines on streaming platforms to advanced medical diagnostics. While AI might sound complex, training an AI system follows a structured process that beginners can understand and even start experimenting with. This guide walks through that process step by step, explaining the technical jargon in plain terms along the way.

Step 1: Understand the Basics of AI and Machine Learning

Before jumping into training, it’s important to grasp the core concepts:

- Artificial Intelligence (AI): A broad field focused on creating systems that can perform tasks that normally require human intelligence.

- Machine Learning (ML): A subset of AI where systems learn patterns from data instead of following explicitly programmed rules.

- Deep Learning: A type of ML that uses multi-layer neural networks to analyze data and make decisions.

Key takeaway: To train AI, you need data, an algorithm (model), and a way to evaluate performance.
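To make those three ingredients concrete, here is a minimal sketch in plain Python. The spam-score data and the `learn_threshold` helper are hypothetical, invented purely for illustration. Instead of hard-coding a rule, the code picks the cutoff that makes the fewest mistakes on labeled examples, then checks that cutoff on examples it has not seen.

```python
# Hypothetical labeled data: (score, label) pairs, where label 1 means "spam".
train = [(0.1, 0), (0.2, 0), (0.35, 0), (0.6, 1), (0.7, 1), (0.9, 1)]
test = [(0.15, 0), (0.3, 0), (0.65, 1), (0.8, 1)]


def predict(score, threshold):
    """The model: predict 1 when the score reaches the threshold."""
    return 1 if score >= threshold else 0


def accuracy(examples, threshold):
    """The evaluation: fraction of examples the model labels correctly."""
    correct = sum(predict(score, threshold) == label for score, label in examples)
    return correct / len(examples)


def learn_threshold(examples):
    """The learning step: try each observed score as a cutoff, keep the best."""
    candidates = sorted(score for score, _ in examples)
    return max(candidates, key=lambda t: accuracy(examples, t))


threshold = learn_threshold(train)
test_accuracy = accuracy(test, threshold)
print(f"learned threshold: {threshold}, test accuracy: {test_accuracy:.2f}")
```

Real models have many more parameters than a single cutoff, but the pattern is the same: data goes in, parameters are chosen to reduce mistakes, and performance is measured on held-out examples.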

Step 2: Define the Problem You Want AI to Solve

AI is only useful when applied to a specific problem. Ask yourself:

- What is the goal? (e.g., predict customer churn, recognize images, recommend products)

- What type of AI is needed? (classification, regression, clustering, NLP, etc.)

- What outcome will define success? (accuracy, reduced costs, better user experience)

By defining your problem clearly, you set the foundation for choosing the right model and dataset.

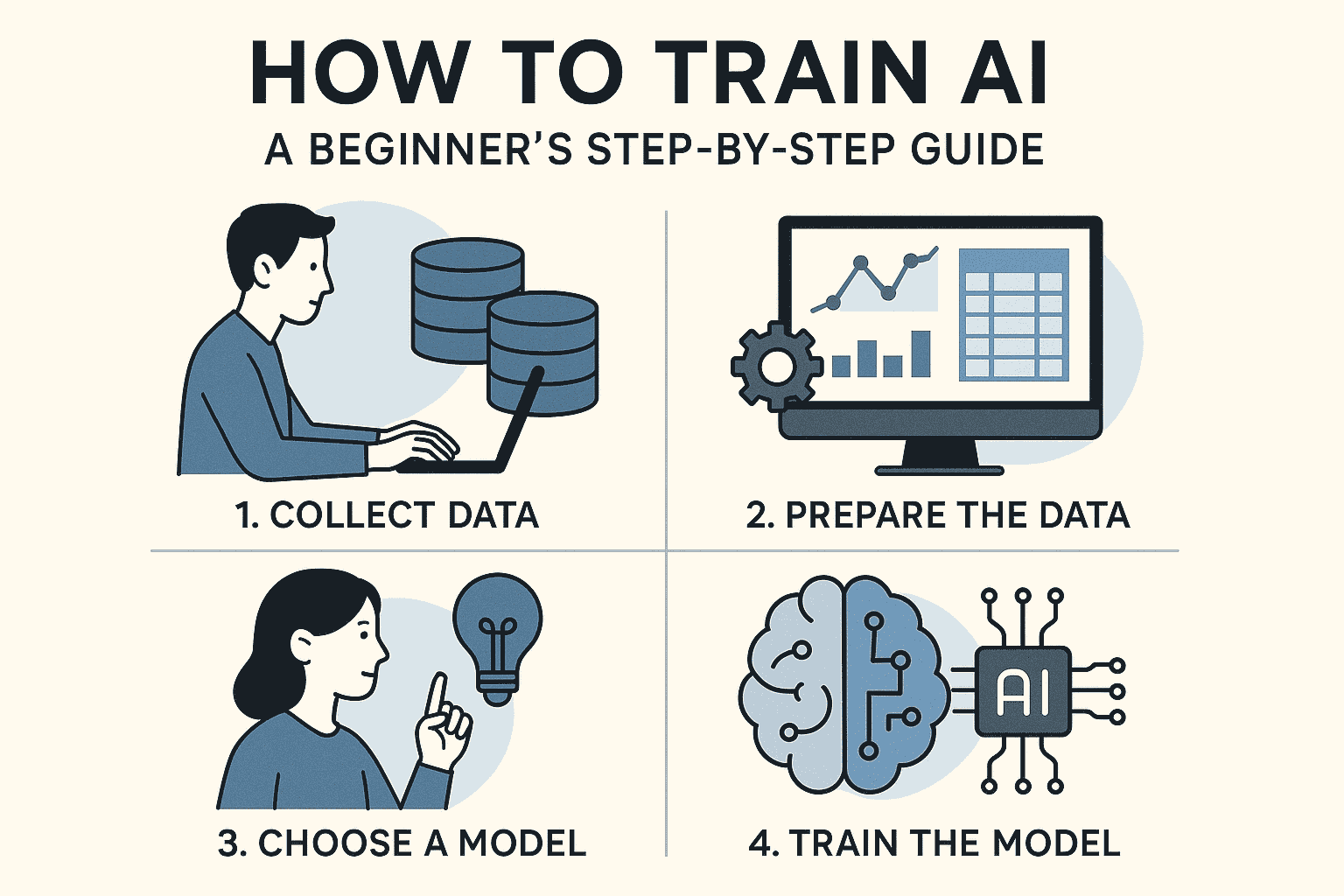

Step 3: Gather and Prepare the Data

Data is the fuel that powers AI. A model can only be as good as the data it learns from.

- Collect Data: Use existing databases, web scraping, sensors, or open-source datasets.

- Clean Data: Remove duplicates, fix errors, handle missing values, and scale numeric features to a common range (normalization).

- Label Data: For supervised learning, ensure data is labeled (e.g., “cat” or “dog” in an image dataset).

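- Split Data: Divide into training, validation, and testing sets. A common starting point is 70% / 15% / 15%: the training set fits the model, the validation set guides tuning, and the test set is held back for the final check.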

Pro Tip: High-quality, diverse, and well-labeled datasets improve model performance significantly.
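The cleaning and splitting steps above can be sketched in plain Python. Everything here is an assumption made for illustration: the toy `raw` records, the helper names, and the fixed random seed. One design choice worth noting is that the scaling range is computed from the training set only and then applied to the other two sets, so no information from validation or test data leaks into training.

```python
import random

# Hypothetical raw records: (feature, label). None marks a missing value.
raw = [(float(i), i % 2) for i in range(100)]
raw += [(5.0, 1), (None, 0), (42.0, 0)]  # a duplicate, a missing value, a duplicate


def clean(records):
    """Drop rows with missing values and exact duplicates, keeping order."""
    seen = set()
    cleaned = []
    for row in records:
        if None in row or row in seen:
            continue
        seen.add(row)
        cleaned.append(row)
    return cleaned


def split(records, train_frac=0.70, val_frac=0.15, seed=0):
    """Shuffle a copy, then cut it into training, validation, and test sets."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )


def min_max_scale(records, low, high):
    """Scale the feature using a range learned elsewhere (the training set)."""
    span = (high - low) or 1.0  # avoid dividing by zero for constant features
    return [((x - low) / span, label) for x, label in records]


train_raw, val_raw, test_raw = split(clean(raw))

# Learn the scaling range from the training set only.
train_values = [x for x, _ in train_raw]
low, high = min(train_values), max(train_values)

train_set = min_max_scale(train_raw, low, high)
val_set = min_max_scale(val_raw, low, high)
test_set = min_max_scale(test_raw, low, high)
print(len(train_set), len(val_set), len(test_set))
```

In practice, libraries such as pandas and scikit-learn provide these operations ready-made; the sketch only shows what they do under the hood.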

Step 4: Choose the Right Algorithm or Model

Different problems require different AI models:

- Linear Regression: Predicts numerical values.

- Logistic Regression: Predicts binary outcomes (yes/no).

- Decision Trees & Random Forests: Work well for classification tasks and can also handle regression.

- Neural Networks: Useful for image recognition, speech, and complex tasks.

- NLP Models: Handle text analysis, chatbots, and sentiment analysis.

For beginners, tools like scikit-learn, TensorFlow, or PyTorch provide pre-built algorithms you can experiment with.
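As a rough illustration of how little code a pre-built algorithm needs, the sketch below trains two scikit-learn models on the same data and compares them. The dataset is synthetic (generated by `make_classification`), and the sizes, `max_depth`, and random seeds are arbitrary choices for the example, not recommendations.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic binary classification data, standing in for a real dataset.
X, y = make_classification(n_samples=300, n_features=5, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0, stratify=y
)

models = {
    "logistic regression": LogisticRegression(max_iter=1000),
    "decision tree": DecisionTreeClassifier(max_depth=3, random_state=0),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)                  # learn from the training set
    scores[name] = model.score(X_test, y_test)   # accuracy on held-out data
    print(f"{name}: test accuracy = {scores[name]:.2f}")
```

Because every scikit-learn estimator shares the same `fit` and `score` interface, trying a different algorithm is usually a one-line change.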

Step 5: Train the Model

Training is where the model learns patterns from data.

- Feed Data: Input training data into the model.

- Adjust Weights: The training algorithm adjusts the model's parameters to reduce the error between its predictions and the true values.

- Iterate: The process repeats across multiple training cycles (epochs).

- Hyperparameter Tuning: Adjust settings such as the learning rate, batch size, and number of network layers to improve performance.

More training cycles help the model fit the data better, but only up to a point. Training for too long can cause overfitting, where the model memorizes the training examples instead of learning patterns that generalize to new data.
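The loop described in this step can be sketched with gradient descent on a one-feature linear model. This is a minimal illustration, not how a framework implements it: the data, the learning rate of 0.05, and the 2000 epochs are all assumed values, and every update uses the whole dataset at once (so the batch size equals the dataset size).

```python
# Hypothetical training data that follows y = 2x + 1 exactly.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]

w, b = 0.0, 0.0        # parameters ("weights") the model will learn
learning_rate = 0.05   # hyperparameter: size of each update step
epochs = 2000          # hyperparameter: passes over the training data


def mean_squared_error(w, b):
    """Average squared gap between predictions w*x + b and true values."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


initial_loss = mean_squared_error(w, b)
for epoch in range(epochs):
    # Gradients of the mean squared error with respect to w and b.
    grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / len(xs)
    grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / len(xs)
    # Move each parameter a small step against its gradient.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

final_loss = mean_squared_error(w, b)
print(f"w={w:.3f}, b={b:.3f}, loss {initial_loss:.3f} -> {final_loss:.6f}")
```

The learned `w` and `b` end up close to the 2 and 1 used to generate the data. Frameworks like TensorFlow and PyTorch automate the gradient calculation, but the feed, adjust, repeat cycle is the same.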

Step 6: Evaluate the Model

After training, test how well the AI performs on unseen data.

- Metrics for Classification: Accuracy, precision, recall, F1-score.

- Metrics for Regression: Mean squared error (MSE), mean absolute error (MAE).

- Confusion Matrix: Tabulates predictions against true labels, showing which classes the model gets right and which it confuses.

Goal: Ensure the model performs well not only on training data but also on data it has never seen.
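The metrics listed above are simple to compute by hand, which helps when interpreting what a library reports. The labels and predictions below are made up for illustration.

```python
# Hypothetical true labels and model predictions for a binary classifier.
y_true = [1, 0, 1, 1, 0, 0, 1, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0, 1, 0]

pairs = list(zip(y_true, y_pred))
tp = sum(t == 1 and p == 1 for t, p in pairs)  # true positives
tn = sum(t == 0 and p == 0 for t, p in pairs)  # true negatives
fp = sum(t == 0 and p == 1 for t, p in pairs)  # false positives
fn = sum(t == 1 and p == 0 for t, p in pairs)  # false negatives

accuracy = (tp + tn) / len(pairs)
precision = tp / (tp + fp)   # of the predicted positives, how many were right
recall = tp / (tp + fn)      # of the actual positives, how many were found
f1 = 2 * precision * recall / (precision + recall)

print("confusion matrix (rows = actual, columns = predicted):")
print(f"  actual 0: {tn} {fp}")
print(f"  actual 1: {fn} {tp}")
print(f"accuracy={accuracy:.2f} precision={precision:.2f} "
      f"recall={recall:.2f} f1={f1:.2f}")

# Hypothetical regression targets and predictions.
actual = [3.0, 5.0, 2.0, 7.0]
predicted = [2.5, 5.0, 3.0, 8.0]
mse = sum((p - a) ** 2 for a, p in zip(actual, predicted)) / len(actual)
mae = sum(abs(p - a) for a, p in zip(actual, predicted)) / len(actual)
print(f"mse={mse} mae={mae}")
```

MSE squares each error, so it punishes large misses more heavily than MAE does. Which one matters more depends on the problem defined in Step 2.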

Step 7: Improve and Optimize

No AI model is perfect after the first run. Common optimization strategies include:

- Feature Engineering: Creating new features from existing data.

- Data Augmentation: Increasing dataset diversity (e.g., rotating images).

- Regularization: Preventing overfitting by penalizing complexity.

- Ensemble Methods: Combining multiple models for better accuracy.

Iterative improvement ensures that the AI model becomes more robust and reliable.
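To show what "penalizing complexity" means in practice, here is a small sketch of L2 (ridge) regularization on a one-weight model with no intercept. The data points and penalty values are assumptions chosen for the example. The penalty term pulls the weight toward zero, and a stronger penalty pulls harder.

```python
# Hypothetical noisy data roughly following y = 3x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.2, 5.9, 9.1, 11.8]


def fit_ridge(xs, ys, penalty):
    """Fit y = w * x by minimizing sum((y - w*x)^2) + penalty * w^2.

    Setting the derivative with respect to w to zero gives the closed form
    w = sum(x*y) / (sum(x*x) + penalty).
    """
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + penalty)


penalties = (0.0, 1.0, 10.0, 100.0)
weights = [fit_ridge(xs, ys, penalty) for penalty in penalties]
for penalty, weight in zip(penalties, weights):
    print(f"penalty={penalty:>5}: w={weight:.3f}")
```

With a penalty of zero this is ordinary least squares. A very large penalty underfits, so the penalty strength is itself a hyperparameter to tune on the validation set.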

Step 8: Deploy the Model

Once the model meets performance goals, it’s time for deployment:

- Integration: Embed the AI model into applications or systems.

- APIs: Use web frameworks like Flask or FastAPI to expose the model as a service that other applications can call.

- Monitoring: Track real-time performance and retrain when necessary.

Deployed models need constant monitoring and updates, as real-world data often evolves over time.
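The core of a prediction service is small: load the trained model once, validate each request, and return a prediction. The sketch below keeps that logic framework-free so it runs on its own. The threshold "model", the `score` field, and the `handle_predict` name are all hypothetical. In a Flask or FastAPI app, a route function would receive the request body and pass it to a handler like this one; that wiring is not shown here.

```python
import json

# Training side: save the learned parameters (a hypothetical cutoff of 0.6).
saved_model = json.dumps({"threshold": 0.6})

# Serving side: load the parameters once at startup, not on every request.
params = json.loads(saved_model)


def predict(score):
    """Apply the loaded model to a single input."""
    return 1 if score >= params["threshold"] else 0


def handle_predict(payload):
    """Validate a JSON-like request body and build a JSON-like response."""
    score = payload.get("score")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        return {"error": "field 'score' must be a number"}
    return {"prediction": predict(score)}


print(handle_predict({"score": 0.9}))
print(handle_predict({"score": "high"}))
```

Validating input before it reaches the model matters more in production than in a notebook, because a deployed service receives data nobody has checked by hand.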

Step 9: Maintain and Retrain

AI is not “train once and forget.” Continuous updates keep the model relevant:

- Retrain with New Data: Periodically update with recent datasets.

- Monitor Drift: Watch for changes in data patterns (data drift).

- Version Control: Track model versions for reproducibility.

Long-term maintenance ensures AI systems remain accurate, reliable, and trustworthy.
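One simple way to watch for data drift is to compare a feature's recent average against what the model saw during training. The sketch below is a deliberately basic check under assumed values: the feature readings are invented, and the cutoff of 3 standard deviations is an arbitrary starting point rather than a standard.

```python
import statistics

# Hypothetical values of one feature at training time and in production.
baseline = [10.1, 9.8, 10.3, 10.0, 9.9, 10.2, 10.1, 9.7]
recent_ok = [10.0, 10.2, 9.9, 10.1]
recent_shifted = [12.4, 12.9, 12.6, 13.1]


def drift_score(baseline, recent):
    """How many baseline standard deviations the recent mean has moved."""
    base_mean = statistics.mean(baseline)
    base_std = statistics.stdev(baseline)
    return abs(statistics.mean(recent) - base_mean) / base_std


def has_drifted(baseline, recent, threshold=3.0):
    """Flag drift when the recent mean moves past the chosen threshold."""
    return drift_score(baseline, recent) > threshold


print("stable batch drifted: ", has_drifted(baseline, recent_ok))
print("shifted batch drifted:", has_drifted(baseline, recent_shifted))
```

A flagged batch is a prompt to investigate and possibly retrain, not proof that the model is wrong. Production systems typically track many features and use more robust statistical tests.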

Beginner-Friendly Tools for Training AI

- Google Colab: Free cloud-based platform to run Python code.

- Scikit-learn: Beginner-friendly ML library.

- TensorFlow & PyTorch: Popular frameworks for deep learning.

- Keras: High-level neural network API that ships with TensorFlow; Keras 3 can also run on JAX and PyTorch.

- OpenAI APIs: Hosted pre-trained models for text and image tasks, usable without training anything yourself.

These tools reduce complexity, making AI accessible even for beginners.

Common Challenges Beginners Face

- Insufficient Data: Small datasets can lead to poor performance.

- Overfitting: The model performs well on training data but fails on test data.

- Complex Algorithms: Choosing overly advanced models too early.

- Hardware Limitations: Some deep learning models require GPUs.

- Bias in Data: Poorly labeled or skewed data leads to biased predictions.

Awareness of these challenges helps beginners avoid costly mistakes.

Conclusion

Training AI may seem intimidating, but by following a structured, step-by-step process, beginners can build and deploy functional AI models. From defining the problem and preparing data to selecting models, training, and deployment, each stage plays a vital role in creating a reliable AI system.

With practice, experimentation, and continuous learning, anyone can master AI training and contribute to the future of intelligent technology.